April 20, 2020

Training Deep Learning Models With Google Cloud

Author:

CEO & Co-Founder

Google Cloud virtual machines are well suited to training deep neural networks because they offer a wide range of CPUs and GPUs. New customers receive a $300 credit, so you can try training deep learning models yourself. To use GPUs, however, you need to upgrade to a full, paid account. The $300 credit does not disappear after the upgrade, but any usage beyond it is billed.

Creating a virtual machine

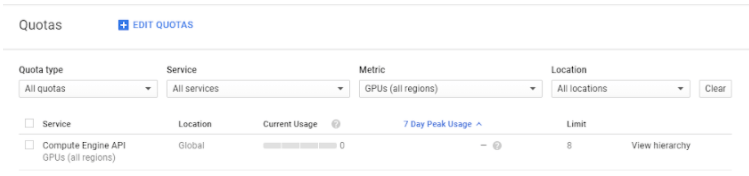

To get access to GPUs, you also need to increase a quota called “GPUs (all regions)”. Google grants the increase manually after a written request, so you need to be creative and patient.

After a successful response, you should see your limit increased, as in the example below. The specialized quotas for each GPU type should increase as well.

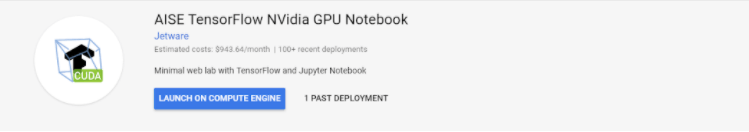

Next, it is time to create a VM instance. Because installing CUDA for an NVIDIA GPU can be a nightmare for newcomers, we recommend using a free instance from the Marketplace called AISE Tensorflow NVidia GPU Notebook, which comes with pre-installed libraries (such as TensorFlow and Keras).

After clicking the ‘Launch on compute engine’ button, we can modify machine parameters such as the number of cores, the number of graphics cards, and the amount of memory. Pay attention to the cost estimate: powerful machines can be very costly. The available resource types depend on the region and zone, so if a particular configuration is not available in a given zone or region, try others.

If you can accept the additional limitation that your resources can be taken away at any moment, you can select the ‘preemptible’ option, which gives you a large discount (remember to save your work often).
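Saving work often on a preemptible machine usually means checkpointing: recording progress after every unit of work so that a restarted run can resume instead of starting over. The following is a minimal sketch of that idea, not the training script used in this article. The names `save_checkpoint`, `load_checkpoint`, `run_training`, and `train_one_epoch` are hypothetical, and the JSON state file is an assumption. A real Keras run would also need to save the model weights alongside the epoch counter.

```python
import json
import os
import tempfile


def save_checkpoint(state, path):
    """Write state to path atomically so a preemption mid-write cannot corrupt it."""
    directory = os.path.dirname(os.path.abspath(path))
    # Write to a temporary file in the same directory, then rename it over the target.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as handle:
            json.dump(state, handle)
            handle.flush()
            os.fsync(handle.fileno())
        os.replace(tmp_path, path)
    except BaseException:
        # Clean up the partial temporary file before re-raising.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise


def load_checkpoint(path, default):
    """Return the saved state, or default when no checkpoint exists yet."""
    if not os.path.exists(path):
        return default
    with open(path) as handle:
        return json.load(handle)


def run_training(total_epochs, checkpoint_path, train_one_epoch):
    """Resume after the last finished epoch and checkpoint after every epoch."""
    state = load_checkpoint(checkpoint_path, {"epoch": 0})
    for epoch in range(state["epoch"], total_epochs):
        train_one_epoch(epoch)  # hypothetical placeholder for the real training step
        state["epoch"] = epoch + 1
        save_checkpoint(state, checkpoint_path)
    return state
```

If the instance is preempted during an epoch, calling `run_training` again with the same checkpoint path repeats only that unfinished epoch.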

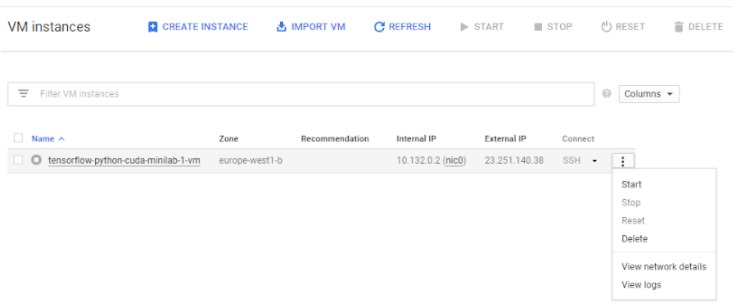

Now your virtual machine instance is ready. Once you turn it on, Google starts billing your account.

You can connect to the VM instance via SSH from your own terminal or by clicking the SSH button, which opens the VM terminal in a browser window. As a welcome message, the AISE instance prints the Python libraries currently installed.

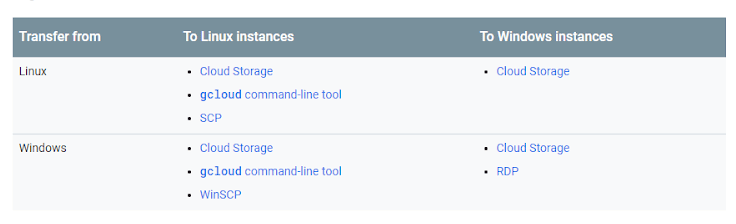

Data transfer

There are several ways of transferring data to the VM. The available options depend on the operating systems (Windows or Linux) you are transferring from and to. Choose the method according to the table:

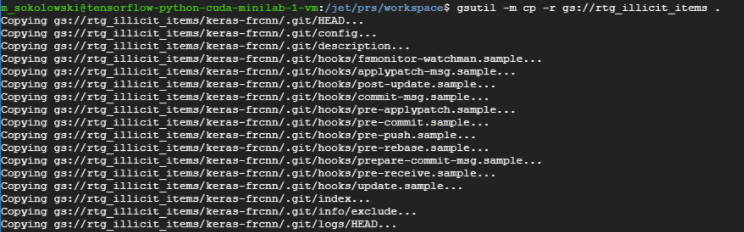

Cloud Storage is available for all combinations. Create a bucket with:

gsutil mb gs://[BUCKET_NAME]/

After uploading files to the bucket, you can copy them from the bucket to the instance using:

gsutil cp gs://[BUCKET_NAME]/[OBJECT_NAME] [LOCAL_DESTINATION]

and from instance to bucket:

gsutil cp [LOCAL_OBJECT_LOCATION] gs://[DESTINATION_BUCKET_NAME]/
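When the same copy has to be repeated for many files or folders, it can help to build the `gsutil cp` command in a script. The sketch below only assembles the argument list and does not run anything. The helper name `gsutil_cp_command`, the bucket `gs://my-training-data/`, and the `./dataset` directory are hypothetical. The `-m` (parallel) and `-r` (recursive) options are standard `gsutil` options.

```python
import shlex


def gsutil_cp_command(source, destination, recursive=False, parallel=False):
    """Build the argument list for a `gsutil cp` call without running it."""
    command = ["gsutil"]
    if parallel:
        command.append("-m")  # top-level option: copy files in parallel
    command.append("cp")
    if recursive:
        command.append("-r")  # copy a whole directory tree
    command += [source, destination]
    return command


# Hypothetical bucket and directory names, used only for illustration.
upload = gsutil_cp_command(
    "./dataset", "gs://my-training-data/", recursive=True, parallel=True
)
print(" ".join(shlex.quote(part) for part in upload))
```

The returned list can be passed to `subprocess.run(upload, check=True)` on a machine where `gsutil` is installed and authenticated.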

You can also use GitHub to conveniently transfer data between the VM and a repository.

Protection from disconnection

One of the main problems with using a virtual machine for a long time is connection stability, especially while training a model. To protect yourself from disconnection, we recommend using a terminal multiplexer called GNU Screen. It should already be available in the AISE instance. After typing

screen

a new terminal session opens. Run your time-consuming program there, then press Ctrl + A followed by Ctrl + D to detach and return to the previous terminal. Your program now keeps running even if the connection drops. To return to your computations, type:

screen -r

Training deep learning models

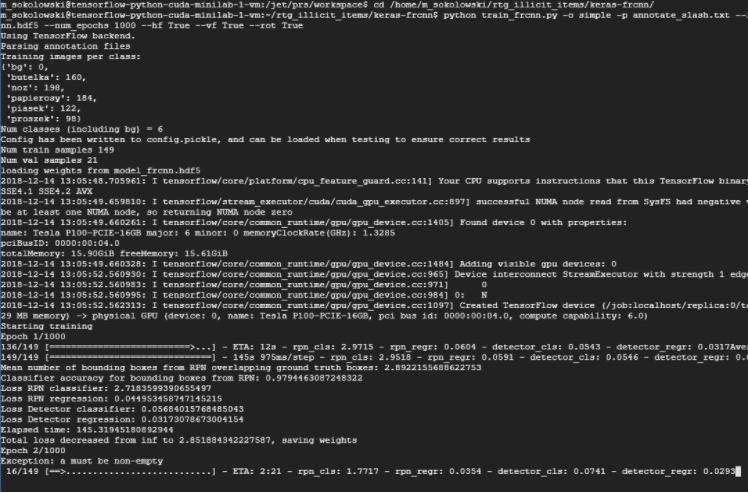

As an example of training a deep neural network model, we use the Faster R-CNN architecture implemented in Keras to find illicit items in X-ray imagery data. We run the training script in a Screen terminal:

python train_frcnn.py

As you can see, one Tesla P100 is used for training.
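The attached GPU can also be checked from a script instead of being read off the screen. The sketch below assumes that the `nvidia-smi` tool is installed on the instance, as is typical for images with NVIDIA drivers. The helper names `parse_gpu_list` and `list_gpus` are hypothetical. The query asks `nvidia-smi` for one CSV line per GPU with its name and total memory.

```python
import subprocess

# Ask nvidia-smi for one CSV line per GPU: "<name>, <total memory>".
QUERY = ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"]


def parse_gpu_list(output):
    """Turn nvidia-smi CSV output into (name, memory) tuples, one per GPU."""
    gpus = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        # Split on the last comma so the name field is kept whole.
        name, memory = (field.strip() for field in line.rsplit(",", 1))
        gpus.append((name, memory))
    return gpus


def list_gpus():
    """Run nvidia-smi on this machine; return [] when it is missing or fails."""
    try:
        result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    except (FileNotFoundError, subprocess.CalledProcessError):
        return []
    return parse_gpu_list(result.stdout)


if __name__ == "__main__":
    for name, memory in list_gpus():
        print(f"{name}: {memory}")
```

An empty result before a long training run is a hint that the instance was created without a GPU or that the driver is not loaded.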

Please remember to press Ctrl + A and Ctrl + D; otherwise you can be (and eventually will be) disconnected, and training will stop.

After all computations are finished, you need to stop the instance in order to release resources and stop billing your account.
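Because it is easy to forget a running instance, one option is to stop it automatically once training ends. The sketch below is one possible approach under stated assumptions: the `gcloud` CLI is installed and authorized to stop the instance, and the instance name `my-vm` and zone `us-central1-a` in the usage line are made up. The helpers `stop_instance_command` and `train_then_stop` are hypothetical names.

```python
import subprocess


def stop_instance_command(instance, zone):
    """Build the gcloud command that stops a Compute Engine instance."""
    return ["gcloud", "compute", "instances", "stop", instance, f"--zone={zone}"]


def train_then_stop(train_command, instance, zone, run=subprocess.run):
    """Run training, then stop the VM whether or not training succeeded."""
    try:
        run(train_command, check=True)
    finally:
        # The stop command runs even when the training command raised an error.
        run(stop_instance_command(instance, zone), check=True)


# Hypothetical usage, left commented out so nothing runs by accident:
# train_then_stop(["python", "train_frcnn.py"], "my-vm", "us-central1-a")
```

The `run` parameter exists only so that the function can be tried with a stand-in before it is pointed at a real instance.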

Have a nice time training your deep learning models!
